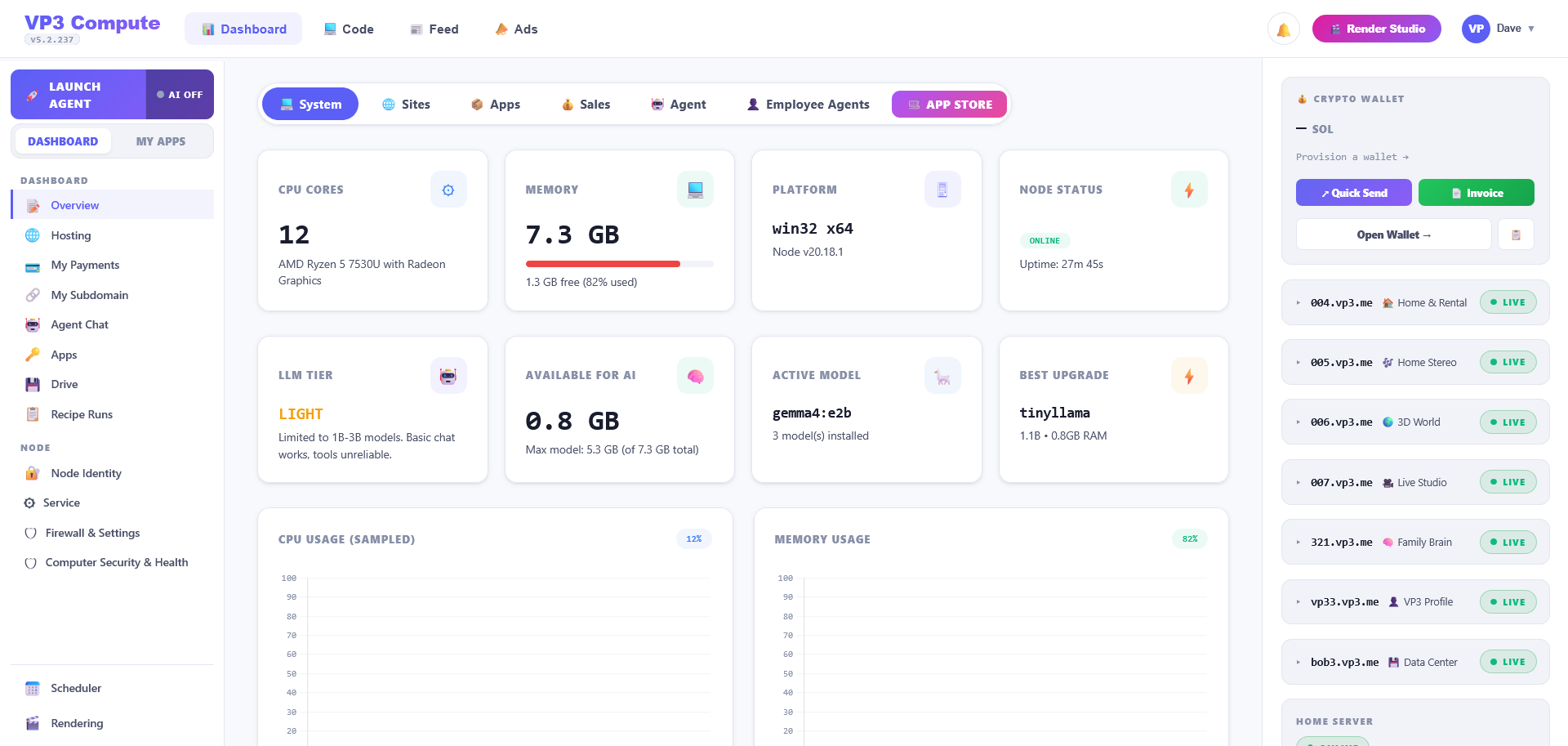

Everything a SaaS would charge for. Running on your machine.

A single binary that bundles eight subdomain apps, local AI inference, encrypted SQLite storage, video rendering, push notifications, and a Cloudflare tunnel. Configure it by clicking checkboxes: no Docker, no YAML.